Jim answers questions from fellow Drillers


Q: I’m working with IT on building a new database, and at the same time I hope to build in new query capabilities that will let me understand our customer lifetime value (CLV) and build on it accordingly. I’ve been tasked with putting together some math examples for IT so they can understand what I’d be looking at and how CLV would be calculated.

A: I’m sort of lost on this, because it sounds to me like you are looking to forecast LTV; the reality is that LTV is only truly known after the customer has defected. So you can go back in time and say “the LTV of customers acquired through this campaign is X” a year or two after the fact, and use that information going forward in the business.

Q: In addition to your Drilling Down book, I have also read Sunil Gupta’s “Managing Customers as Investments.” One thing that stands out for me is that Gupta stresses the importance of allowing for a discount rate, and I don’t see any mention of a discount rate in the examples you provide in your book.

A: Yes, that’s a case in point for my comments above. The reason I don’t mention NPV is that I favor a relative LTV approach; I just think it’s more practical. In my experience, management is not really interested in the NPV approach when discussing Marketing; they want to know about “cash I make now,” not cash you are forecasting in the future.

Said another way, to use NPV you need something to discount to the present, which means you are making a forecast of absolute LTV, and that forecast will probably be wrong because too many unknowns will come into play, at least in B2C.

Q: This is the request from my IT rep, which leads me to believe that she may have missed the absolute vs. relative LTV conversation we had, or maybe I’m missing something. Once I have these examples, we will be ready to begin our modeling. Here is the formula I was going to provide IT for LTV:

(bunch of equations and data)

A: Again, far be it from me to play IT expert here, but what I would concentrate on when creating a new database is making sure you have all the data you need, making the acquisition of that data as clean as possible, and creating a database that is as flexible as possible. I don’t really understand why you need to provide any “LTV formula,” because if the data and structure are right, you can run any formula or query any time you want. You can go both back in time and forward in time and use different equations for each; the big difference is that looking back in time gives you “actual” results, while looking forward gives you a “predicted” result that is adjusted with NPV.

What I’m thinking is that there may be some confusion on both sides about the difference between using customer data and valuation for Strategic versus Tactical purposes, so perhaps some more info on that will help you and IT communicate more effectively on this project.

A Strategic use of customer valuation would probably be fairly stable, and could have static formulas created for reporting purposes at the CEO level. You would probably use the NPV approach and forecast absolute LTV (an actual number).

For Tactical use of customer valuation in campaign management and driving Marketing ROI, you are better off not messing around with NPV and looking at LTV from a relative (is it getting better or worse) perspective. Let’s see if I can give you some practical examples of what I mean.

The Gupta formula:

$$CLV = m \left( \frac{r}{1 + i - r} \right)$$

where:

m = customer margin or profit per period (year)

r = retention rate

i = discount rate

is a marvelous macro equation to describe LTV when you are trying to make strategic customer investment decisions.
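The formula is simple enough to compute directly. A minimal Python sketch, assuming constant per-period margin and retention over an infinite horizon (the same simplifications the macro formula makes); the function name and the example inputs are hypothetical:

```python
def gupta_clv(margin: float, retention: float, discount: float) -> float:
    """Gupta-style CLV: m * r / (1 + i - r).

    Assumes constant margin per period, constant retention, and an
    infinite horizon, exactly as the macro formula does.
    """
    if not 0.0 <= retention <= 1.0:
        raise ValueError("retention must be between 0 and 1")
    if discount < 0.0 or 1.0 + discount - retention <= 0.0:
        raise ValueError("need a non-negative discount rate with 1 + i - r > 0")
    return margin * retention / (1.0 + discount - retention)


# Hypothetical customer: $100 margin per year, 80% retention, 10% discount rate.
example_clv = gupta_clv(100.0, 0.80, 0.10)
print(f"CLV: ${example_clv:.2f}")  # CLV: $266.67
```

Setting `margin` to 1 turns the result into the "multiple of current period profit" the next paragraphs discuss.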

He also provides modifications to this model for scenarios where the retention rate or profit varies, with the overall result that, on average, the LTV of a customer is between 1 and 4.5 times the current period profit.

This is probably shockingly low to some people, but you have to realize that at a 60% retention rate, only 2.8% of customers are left after 7 years, and even at 90% retention with a 12% discount rate, the LTV multiple on current period profit is only about 4.
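A quick check of both figures, assuming the same retention rate every year. Note that 2.8% remaining after 7 years corresponds to 60% retention per year (0.6^7 ≈ 0.028); a 60% defection rate would leave 0.4^7, or about 0.16%. The function names are illustrative:

```python
def share_remaining(retention: float, years: int) -> float:
    """Fraction of an acquired cohort still active after `years` periods,
    assuming the same retention rate every period."""
    return retention ** years


def clv_multiple(retention: float, discount: float) -> float:
    """Gupta LTV as a multiple of current-period margin: r / (1 + i - r)."""
    return retention / (1.0 + discount - retention)


print(f"{share_remaining(0.60, 7):.1%}")   # 2.8%
print(f"{share_remaining(0.40, 7):.2%}")   # 0.16%
print(f"{clv_multiple(0.90, 0.12):.2f}")   # 4.09
```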

This is a perfectly competent analysis, and it is especially powerful in its simplicity; I use this kind of thing when talking to CFOs, CEOs and other financially oriented, strategically focused individuals. The plain fact of the matter is that, because of the discount rate, any arguments about “how long a customer is a customer” become moot, because the incremental value of the out years is very small. This realization generally kicks C-level people in the gut and gets them thinking about “doing something.”
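To see why the out years matter so little, break the formula back into its yearly terms: year t contributes m · (r / (1 + i))^t, and those terms sum to m · r / (1 + i − r). A minimal sketch, assuming constant retention and margin; at 90% retention and a 12% discount rate, roughly 89% of the infinite-horizon value is captured in the first 10 years and nearly 99% by year 20. Function names are illustrative:

```python
def yearly_discounted_value(margin: float, retention: float, discount: float, years: int) -> list[float]:
    """Expected present value contributed by each year t = 1..years:
    margin * (retention / (1 + discount)) ** t.
    Summed to infinity, this series equals m * r / (1 + i - r)."""
    q = retention / (1.0 + discount)
    return [margin * q ** t for t in range(1, years + 1)]


def share_of_lifetime_value(retention: float, discount: float, years: int) -> float:
    """Fraction of the infinite-horizon value captured in the first `years` years."""
    captured = sum(yearly_discounted_value(1.0, retention, discount, years))
    total = retention / (1.0 + discount - retention)
    return captured / total


for horizon in (5, 10, 20, 30):
    print(f"first {horizon:>2} years: {share_of_lifetime_value(0.90, 0.12, horizon):.1%} of LTV")
```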

Strategic customer value, in case it’s unclear, would be used for decisions like these:

* What lines or services should we add?

* What marketing mix is optimal?

* Should we implement a loyalty program?

Formulas like Gupta’s are also often used to value companies for sale or acquisition.

However, on a practical, tactical level, we know the dangers of making database marketing decisions using the “average customer,” because there is a lot of behavioral skew and a huge profit difference between the best and the not-so-good customers.

So, when you’re developing targeting ideas and just trying to see where you make the most money so you can optimize customer campaigns, I think his model is really overkill.

When I look at campaigns, I basically look at “breakeven”: will the net margin after all costs pay for the customer acquisition, or for the incremental sales generated from the customer as a result of the campaign? Once the customer segment hits breakeven, it’s all upside profit from there, right? As long as you don’t do something to destroy value (which is certainly possible), LTV should remain the same or increase, and since you are past breakeven, the financial liability is zero.
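A minimal sketch of the breakeven test, assuming you can attribute gross margin and all campaign costs to each segment; the `CampaignSegment` class, its fields, and the example numbers are hypothetical:

```python
from dataclasses import dataclass


@dataclass
class CampaignSegment:
    """Hypothetical per-segment campaign results."""
    name: str
    customers: int
    gross_margin: float    # margin on sales attributed to the campaign
    campaign_cost: float   # acquisition, promotion, discounts and other campaign costs

    @property
    def net_per_customer(self) -> float:
        """Net margin per customer after all campaign costs."""
        return (self.gross_margin - self.campaign_cost) / self.customers

    @property
    def past_breakeven(self) -> bool:
        """True once net margin has paid back every campaign cost."""
        return self.net_per_customer >= 0


segment_a = CampaignSegment("segment_a", customers=1_000, gross_margin=12_000.0, campaign_cost=10_000.0)
print(segment_a.net_per_customer, segment_a.past_breakeven)  # 2.0 True
```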

And that’s when you start looking at relative value. If one segment beats breakeven by $2 per customer and another beats it by $20 per customer, then, all else equal, I invest more in the campaign that beats breakeven by $20 and let it go; it really doesn’t matter what the end or “terminal” LTV of the customer is. I made the right decision by allocating toward higher ROI, so I don’t need to know absolute LTV, do I? I’m in the black.
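One way to turn “invest more in the segment that beats breakeven by $20” into a rule is to split a budget in proportion to each segment’s net margin above breakeven. Proportional weighting is an illustrative assumption, not a rule from the text; segment names and the budget are hypothetical:

```python
def allocate_budget(net_per_customer: dict[str, float], budget: float) -> dict[str, float]:
    """Split `budget` across segments in proportion to how far each beats breakeven.

    Segments at or below breakeven get nothing. Proportional weighting is an
    illustrative assumption; the only requirement from the text is that the
    segment with the higher net margin gets more.
    """
    winners = {seg: net for seg, net in net_per_customer.items() if net > 0}
    total = sum(winners.values())
    if total == 0:
        return {seg: 0.0 for seg in net_per_customer}
    return {seg: budget * winners.get(seg, 0.0) / total for seg in net_per_customer}


plan = allocate_budget({"segment_a": 2.0, "segment_b": 20.0, "segment_c": -5.0}, budget=22_000.0)
print(plan)  # {'segment_a': 2000.0, 'segment_b': 20000.0, 'segment_c': 0.0}
```

Note that no absolute or terminal LTV appears anywhere in the calculation; only the relative margins drive the split.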

Put another way, I’m optimizing a system, trying to make it better and better at generating profits. The final, absolute numeric LTV value is not really relevant to me. If what I’m doing is always adding incremental value, absolute LTV will take care of itself and can be measured as a separate exercise, if you have the resources.

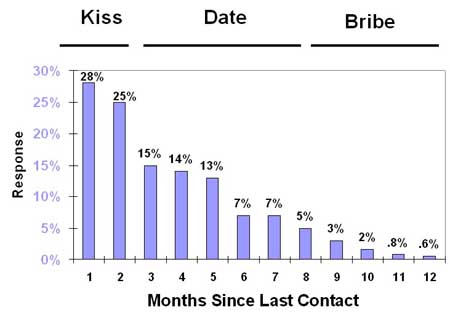

Next, we know these relative valuations can change over time. To monitor further, you use a simple “sales to date” analysis to see if LTV is still growing and a “% Recent” index to predict if sales will continue to grow. Based on this longer-term idea, you make further adjustments to your campaign allocation.

So, for example, let’s say the net $2 customers above are late bloomers and the net $20 customers are fast starters. You do this sales-to-date / Recency analysis at 3 or 6 months and find that the $2 folks are now at $30 with great Recency, while the $20 folks are still at $20 with terrible Recency. So you adjust your campaign allocations to reflect this change, based on the real data rather than a forecast that has been discounted with NPV.
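A minimal sketch of the sales-to-date and % Recent check per segment, assuming a customer-level snapshot with cumulative net sales and days since last purchase. The 90-day Recency cutoff, the class, and the snapshot data (built to mirror the late-bloomer / fast-starter example above) are hypothetical:

```python
from dataclasses import dataclass
from statistics import mean


@dataclass
class Customer:
    segment: str                   # campaign segment the customer came from
    net_sales_to_date: float       # cumulative net value since the campaign
    days_since_last_purchase: int


def segment_health(customers: list[Customer], recent_days: int = 90) -> dict[str, dict[str, float]]:
    """Average net sales to date and % Recent for each segment.

    `recent_days` is a hypothetical cutoff: a customer counts as Recent if
    their last purchase falls within that many days.
    """
    by_segment: dict[str, list[Customer]] = {}
    for c in customers:
        by_segment.setdefault(c.segment, []).append(c)

    report = {}
    for seg, members in by_segment.items():
        recent = sum(1 for m in members if m.days_since_last_purchase <= recent_days)
        report[seg] = {
            "avg_net_to_date": mean(m.net_sales_to_date for m in members),
            "pct_recent": recent / len(members),
        }
    return report


# Hypothetical snapshot six months after the campaign.
snapshot = [
    Customer("late_bloomers", 30.0, 20),
    Customer("late_bloomers", 30.0, 45),
    Customer("fast_starters", 20.0, 200),
    Customer("fast_starters", 20.0, 240),
]
health = segment_health(snapshot)
# Rank segments for the next round of allocation: value to date first, then % Recent.
ranked = sorted(health, key=lambda s: (health[s]["avg_net_to_date"], health[s]["pct_recent"]), reverse=True)
print(ranked)  # ['late_bloomers', 'fast_starters']
```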

I mean, you have the actual data, why mess with a forecast?

The above is an example of why I don’t see why a “formula” needs to be locked in, or why you would want to strap yourself into that kind of inflexibility, unless, of course, there is something going on system-wise that I am (obviously) not competent to comment on. None of these queries are complex formulas, but you do need all the source data to keep track of the campaign populations and go back and query them. That’s why I stressed data and flexibility above.

Now, if we’re talking about a resource issue (that is, you work in a “set it and forget it” type of environment where the ability to run custom, though very simple, queries on the data is lacking, and you need a “formula” approach), I would use the LifeCycle Grids from my book to replicate the idea above. If you don’t recall this idea, the Grids are similar to the pictures I used in this blog post.

Once the data collection and output for one of these Grids is programmed, all you need is the ability to run a Grid for each campaign – and that should be super-easy to set up, even as a self-service thing. For example, you go into a menu system and select:

Run LifeCycle Grid → Choose Campaign Code → Choose Date Range

and run your own reports. They could be set up to run overnight or on weekends if system resources are an issue.
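A minimal sketch of what “Run LifeCycle Grid for one campaign and date range” might compute, assuming the Grid crosses Recency with Frequency and counts customers in each cell. The `Purchase` record, the bucket edges, and the campaign codes are all hypothetical; swapping `frequency_bucket(count)` for a band on summed `amount` would give the dollars-instead-of-Frequency variation the text mentions:

```python
from collections import Counter
from dataclasses import dataclass
from datetime import date


@dataclass
class Purchase:
    customer_id: str
    campaign_code: str   # hypothetical: campaign the customer was sourced from
    order_date: date
    amount: float


def recency_bucket(days: int) -> str:
    """Hypothetical Recency bands, in days since last purchase."""
    for limit, label in ((30, "0-30d"), (90, "31-90d"), (180, "91-180d"), (365, "181-365d")):
        if days <= limit:
            return label
    return "365d+"


def frequency_bucket(orders: int) -> str:
    """Hypothetical Frequency bands: 1, 2, 3, 4, then 5+ orders."""
    return str(orders) if orders < 5 else "5+"


def lifecycle_grid(purchases, campaign_code: str, start: date, end: date, as_of: date) -> Counter:
    """Count customers in each (Recency band, Frequency band) cell
    for one campaign code and order-date range."""
    per_customer: dict[str, tuple[date, int]] = {}
    for p in purchases:
        if p.campaign_code != campaign_code or not start <= p.order_date <= end:
            continue
        last, count = per_customer.get(p.customer_id, (p.order_date, 0))
        per_customer[p.customer_id] = (max(last, p.order_date), count + 1)

    grid: Counter = Counter()
    for last, count in per_customer.values():
        grid[(recency_bucket((as_of - last).days), frequency_bucket(count))] += 1
    return grid


orders = [
    Purchase("c1", "SPRING", date(2024, 1, 10), 40.0),
    Purchase("c1", "SPRING", date(2024, 3, 15), 25.0),
    Purchase("c2", "SPRING", date(2024, 2, 1), 60.0),
    Purchase("c3", "FALL", date(2024, 2, 5), 30.0),
]
grid = lifecycle_grid(orders, "SPRING", date(2024, 1, 1), date(2024, 3, 31), as_of=date(2024, 4, 1))
# c1 lands in ("0-30d", "2"), c2 in ("31-90d", "1"); c3 is excluded (different campaign)
```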

Then, you could just visually compare the Grids or have them output to spreadsheets and perform calculations on them. It should be very obvious which campaigns generate higher relative LTV using this method, over both the short term and the long term. If dollars are more important than the number of transactions, use dollars instead of Frequency in the Grids.

If you really want to get to a “net net net” LTV number (and you should!), have that number stored and accumulated in the database rather than recalculated in the query each time, and use that number instead of Frequency in the Grids. This further reduces the need for custom work. In other words, if the system knows margin and cost of campaign, have the net net net value calculated during updates and stored in the customer record.

Basically, all you need to know is the margin on the product and the cost of all campaigns, which would come from a promotional history table – cost of all promotions (and any discounts) the customer received. This net LTV number could be updated on a weekly or monthly basis. If you want extra credit, also net out the cost of customer service calls using the customer service history or use an average per customer during updates. Then when you run the Grids, you will be working with net cash, which is always a good thing.
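A minimal sketch of the update step, assuming each period’s product margin, promotion costs, discounts, and service-call counts have already been pulled from the order, promotional history, and customer service history tables. The record layout, function name, and figures are hypothetical:

```python
from dataclasses import dataclass


@dataclass
class CustomerRecord:
    customer_id: str
    net_ltv: float = 0.0   # cumulative "net net net" value stored on the record


def apply_period_update(
    record: CustomerRecord,
    product_margin: float,
    promo_costs: list[float],
    discounts: list[float],
    service_calls: int = 0,
    cost_per_call: float = 0.0,
) -> CustomerRecord:
    """Add one period's net cash to the stored running total.

    Inputs are assumed to come from order, promotional history and customer
    service history tables; `cost_per_call` may be an actual or average cost.
    """
    period_net = product_margin - sum(promo_costs) - sum(discounts) - service_calls * cost_per_call
    record.net_ltv += period_net
    return record


customer = CustomerRecord("C-001")
apply_period_update(customer, product_margin=50.0, promo_costs=[3.0, 2.0], discounts=[5.0],
                    service_calls=1, cost_per_call=4.0)
apply_period_update(customer, product_margin=20.0, promo_costs=[1.0], discounts=[])
print(customer.net_ltv)  # 55.0
```

Because the running total lives on the record, the Grid queries only read one stored field instead of recomputing net value each time.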

Look, I think the Gupta book is fabulous and have reviewed it on my blog. But you always have to ask yourself, why am I doing this analysis, and what kind of action will be taken? His book is largely about strategic customer analysis (the idea of a “portfolio” of customers), and I’m guessing you are looking for something more tactical because of your focus on campaigns in the data.

His approach is long-term and planning oriented; mine is short-term and results oriented. Neither conflicts with the other; they operate on different time horizons for different purposes, and ultimately the short-term tactical results for relative LTV should roll up to the long-term strategic forecasts of absolute LTV.

That is, if the forecasts are accurate!

Jim

Get the book at Booklocker.com

Find Out Specifically What is in the Book

Learn Customer Marketing Concepts and Metrics (site article list)

Download the first 9 chapters of the Drilling Down book: PDF