…Inches Closer to Reality, according to this article in Optimize Magazine. See also how Microsoft wants to make BI “Ubiquitous” in this article from Information Week.

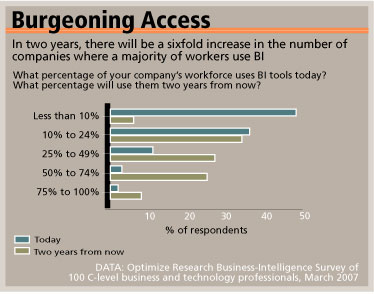

(graph above from Optimize Magazine article)

Now, I generally think giving people access to data is a good thing, but I’ve seen it go horribly wrong in many cases. You can’t just open up data access and let people construct analysis without ground rules. You’ll have complete chaos, with people torturing the data in whichever direction best makes their case.

Rather, follow a few simple rules to prevent analytical chaos:

1. Please set up a “best practices” team or “center of excellence” concept so people who want to do honest analysis on their own can get help with the simple stuff. If you have experienced endless cycles of re-analyzing the same problem over and over with the business side, you know what I am talking about here. People need help really tightly defining the questions they are trying to answer and guidance on what the best (most accurate?) way to generate answers is.

Do these new analyzers know how seasonality affects the business? Do they understand how time-frames can distort an analysis? How cycle billing or lag times in fulfillment can affect sales recognition? Are they aware of any data quality issues?

2. If you can’t set up a best practices team, at least try to create a best practices manual / library of some kind.

3. Please, please define all the data people will be using. Some of this definition problem you can control with the drop-downs you provide, but make sure everybody knows specifically what the drop-down items mean. For example, if someone is doing a “customer count” of some kind, what is a customer, and who is included in that count? If someone ordered and then cancelled (so no net positive financial transaction ever took place), will that person be included in a “customer count”? If not, what is that person called, what is their “status”? Is that status available as a drop-down selection?

If they select “Sales” as a variable, are we talking about Gross Demand? Net of cancels / returns? Net of discounts? Net of bundling, packaging, volume pricing?

4. If an analysis will be used for strategic decision making, it must be “vetted” first by the best practices group. If people want to make “local” or silo decisions based on their own analysis, well, they are making their own bed. But when it comes down to major shifts in business practices, you simply cannot rely on a local analysis; there is too much risk involved.
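To make rule 3 concrete, here is a minimal Python sketch of what “defining the data” might look like in practice. It assumes a hypothetical `Order` record carrying a gross amount, a discount, and an amount refunded for cancels / returns; the field and function names are illustrative assumptions, not taken from any particular BI tool. The point is simply that each term (“Gross Demand”, “Net Sales”, and two candidate meanings of “customer”) gets exactly one written-down definition.

```python
from collections.abc import Iterable
from dataclasses import dataclass


@dataclass(frozen=True)
class Order:
    # Hypothetical order record; field names are illustrative assumptions.
    customer_id: str
    gross_amount: float  # demand at the time of ordering
    discount: float      # discounts applied to the order
    refunded: float      # amount refunded for cancels / returns (after discount)

    @property
    def net_amount(self) -> float:
        # Net sales for one order: gross demand less discounts and refunds.
        return self.gross_amount - self.discount - self.refunded


def gross_demand(orders: Iterable[Order]) -> float:
    # "Sales" meaning gross demand: nothing netted out.
    return sum(o.gross_amount for o in orders)


def net_sales(orders: Iterable[Order]) -> float:
    # "Sales" meaning net of discounts and cancels / returns.
    return sum(o.net_amount for o in orders)


def customers_any_order(orders: Iterable[Order]) -> set[str]:
    # Definition 1: everyone who placed an order, even if they cancelled it all.
    return {o.customer_id for o in orders}


def customers_net_positive(orders: Iterable[Order]) -> set[str]:
    # Definition 2: only people with a net positive financial transaction.
    totals: dict[str, float] = {}
    for o in orders:
        totals[o.customer_id] = totals.get(o.customer_id, 0.0) + o.net_amount
    return {cid for cid, total in totals.items() if total > 0}


# Hypothetical sample data.
orders = [
    Order("A", 100.0, 10.0, 0.0),  # discounted order, kept
    Order("B", 50.0, 0.0, 50.0),   # ordered, then cancelled everything
    Order("C", 80.0, 0.0, 20.0),   # partial return
]

print("Gross demand:", gross_demand(orders))
print("Net sales:", net_sales(orders))
print("Customers (any order):", len(customers_any_order(orders)))
print("Customers (net positive):", len(customers_net_positive(orders)))
```

With these three sample orders, an analyst counting everyone who ordered reports 3 customers while one counting only net positive transactions reports 2, and “Sales” is either 230.0 or 150.0 depending on which definition was picked. Writing the definitions down once, and pointing every drop-down at them, is what keeps those numbers from diverging.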

You really have to think some of this stuff through first so you don’t end up in a meeting with the CEO where 3 different people present data that should be consistent but end up divergent. That’s the fastest way to induce a full-on beating CEO forehead vein I know of.

I am all for people being “exploratory” and going through their own “what if?” kinds of exercises as they try to discover more about their products, services, or customers. This is a good thing. But confusion and chaos due to lack of standards and a central authority on the validity of an analysis is a great way to doom this kind of effort.

Anybody else been through one of these “everybody in the data pool” rollouts? Do you have any other “rules” you would like to add to help people get through one of these implementations successfully?

Imagine for a moment that you are a C-level business executive (not technology) in a medium to large organization. Now think about how many people in your firm use BI tools. You can’t, can you? Because you HAVE NO IDEA how many people use BI tools.

I also seem to recall a survey done in 2005 that predicted that by 2007 there would be a sixfold increase in the number of firms where half or more of employees used BI tools.

Or was that a 2003 study predicting 2005 use?

Regardless of the validity of the survey, your prescription is right on, Jim — up to #3. Vetting strategic analyses through a best practices team just isn’t going to happen in most firms.

Ron, given we agree on what a “strategic analysis” is, show me a company that is willing to trust this kind of analysis to a Director-level silo player without some kind of vetting process and I’ll show you a company that doesn’t really manage by the numbers.

In other words, they’re going to ignore the analysis and go with their gut anyway – unless, of course, the analysis supports what the (ample?) C-Level gut already says.

If that’s the case, “company-wide BI” is a huge waste of time and effort.

I’m not sure we disagree on what a strategic analysis is, but we didn’t define it, so that’s probably where our not-meeting of the minds lies. Not only would I be worried to see a company trust a strategic analysis to a “director-level silo player”, I’d be worried to see a company force that analysis to be vetted by a bunch of “director-level, metadata modeling, techno-BI geeks”. No insult intended. In that case, the strategic analysis was probably a huge waste of time and effort.

I think we’re in sync. If I define a “best practices analytical team” as headed by a Chief Analytics Officer reporting directly to the CEO, as described here:

http://blog.jimnovo.com/2007/02/14/medium-metric/

does that make you feel any better about the “vetting” thing? Sorry if I was a bit vague on this. What I was trying to say is that if you are going to roll out a company-wide BI initiative, you must have some kind of “3rd party” analysis verification team at a very high level to make it really work; otherwise, you will get analytical chaos as everybody tries to torture their own analytics in their favor.

I do not believe that adding more users to existing BI tools will add enough value to justify its costs. In fact, when I hear about companies adding more and more users to their BI tools, I have to ask why (as I did here – http://www.edmblog.com/weblog/2006/04/users_look_to_o.html – when I read about companies providing BI to their customers and partners).

You need your business to make better decisions. If all your decisions are made manually by people who can, eventually, learn to use BI tools, then perhaps adding more users will help. But might it not be more effective to stop giving people reporting tools and instead make their systems smart enough to be useful?

JT

http://www.smartenoughsystems.com